In this article I am going to walk through the steps to add a Conda environment to your Jupyter Notebook.

Step 1: Create a Conda environment.

conda create --name firstEnv

Once the environment has been created, the console shows the command for activating it.

Step 2: Activate the environment using the command shown in the console. Once it is activated, you can install any package you need into this environment.

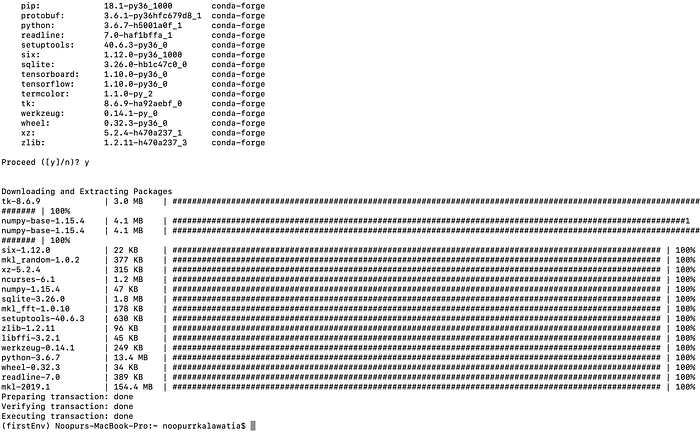

For example, I am going to install TensorFlow in this environment. The command to do so is:

conda install -c conda-forge tensorflow

Step 3: You have now successfully installed TensorFlow. Congratulations!

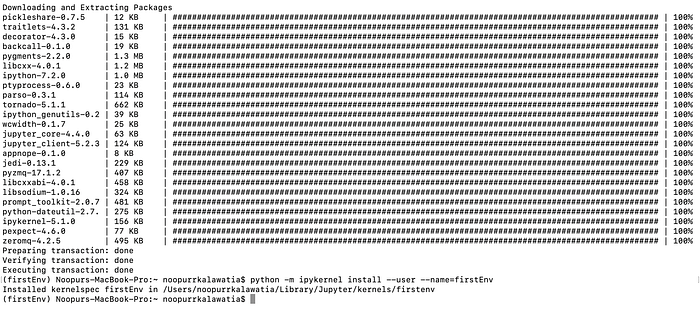
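If you want to double-check the install from Python itself, a small check like the one below can help. It is only a sketch: the helper name `is_installed` is made up for illustration, and it assumes you run it with the interpreter from firstEnv (that is, with the environment activated).

```python
import importlib.util


def is_installed(package_name):
    """Return True if the package can be found in the current environment."""
    # find_spec looks the module up without actually importing it
    return importlib.util.find_spec(package_name) is not None


if __name__ == "__main__":
    # "tensorflow" is the package installed in Step 2
    print("tensorflow installed:", is_installed("tensorflow"))
```

If this prints False, the most likely cause is that the script ran with a different Python than the one inside firstEnv.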

Now comes the step that makes this conda environment available in Jupyter Notebook. To do so, install ipykernel while firstEnv is still activated:

conda install -c anaconda ipykernel

After installing this, just type:

python -m ipykernel install --user --name=firstEnv

Using the above command, I will now have this conda environment in my Jupyter Notebook.

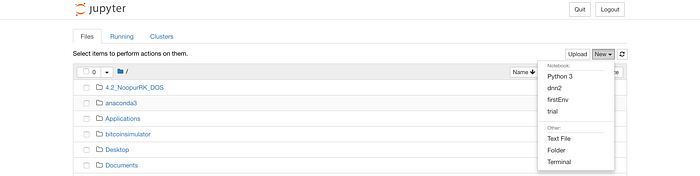

Step 4: Open Jupyter Notebook and check the kernel list to see the shining new firstEnv.
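Once a notebook is running on the new kernel, it can be reassuring to confirm which Python it is actually using. The cell below is a minimal sketch; the function name `current_environment` is hypothetical, and it assumes the default conda layout, where the environment folder is named after the environment.

```python
import os
import sys


def current_environment():
    """Return (interpreter path, environment folder name) for the running Python."""
    # sys.prefix is the root folder of the environment the interpreter belongs to
    env_folder = os.path.basename(os.path.normpath(sys.prefix))
    return sys.executable, env_folder


if __name__ == "__main__":
    executable, env_name = current_environment()
    print("Interpreter:", executable)
    print("Environment folder:", env_name)
```

With the firstEnv kernel selected, the environment folder should normally read firstEnv. If it shows something else, the notebook is probably still attached to another kernel.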

USAGE:

List kernels

Use jupyter kernelspec list

$ jupyter kernelspec list

Available kernels:

global-tf-python-3 /home/felipe/.local/share/jupyter/kernels/global-tf-python-3

local_venv2 /home/felipe/.local/share/jupyter/kernels/local_venv2

python2 /home/felipe/.local/share/jupyter/kernels/python2

python36 /home/felipe/.local/share/jupyter/kernels/python36

scala /home/felipe/.local/share/jupyter/kernels/scala
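The same listing can also be read from a script, since `jupyter kernelspec list` accepts a `--json` flag. The following is a rough sketch rather than an official recipe: `parse_kernelspecs` is a made-up helper, and it assumes the JSON output has a top-level "kernelspecs" object whose entries carry "resource_dir" and "spec" keys.

```python
import json
import shutil
import subprocess


def parse_kernelspecs(json_text):
    """Map each kernel name to (display name, folder) from the --json output."""
    data = json.loads(json_text)
    kernels = {}
    for name, info in data.get("kernelspecs", {}).items():
        # Fall back to the kernel name if no display name is present
        display_name = info.get("spec", {}).get("display_name", name)
        kernels[name] = (display_name, info.get("resource_dir"))
    return kernels


if __name__ == "__main__":
    jupyter = shutil.which("jupyter")
    # Only call the CLI if jupyter is actually on the PATH
    if jupyter:
        result = subprocess.run(
            [jupyter, "kernelspec", "list", "--json"],
            capture_output=True, text=True,
        )
        if result.returncode == 0:
            for name, (display, folder) in parse_kernelspecs(result.stdout).items():
                print(f"{name}: {display} -> {folder}")
```

The folder printed for each kernel is the one that holds its kernel.json file.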

Remove kernel

Use jupyter kernelspec remove <kernel-name>

$ jupyter kernelspec remove old_kernel

Kernel specs to remove:

old_kernel /home/felipe/.local/share/jupyter/kernels/old_kernel

Remove 1 kernel specs [y/N]: y

[RemoveKernelSpec] Removed /home/felipe/.local/share/jupyter/kernels/old_kernel

Change Kernel name

1) Use

$ jupyter kernelspec list

to see the folder the kernel is located in.

2) In that folder, open the file kernel.json and edit the "display_name" option.
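If you would rather not edit the file by hand, the two steps above can be sketched in a few lines of Python. This is just an illustration: `set_display_name` is a hypothetical helper, and the folder you pass in is whatever `jupyter kernelspec list` reported for your kernel.

```python
import json
from pathlib import Path


def set_display_name(kernel_dir, new_name):
    """Rewrite "display_name" in <kernel_dir>/kernel.json and return the old value."""
    kernel_json = Path(kernel_dir) / "kernel.json"
    spec = json.loads(kernel_json.read_text(encoding="utf-8"))
    old_name = spec.get("display_name")
    # Only the display name changes; every other key is written back untouched
    spec["display_name"] = new_name
    kernel_json.write_text(json.dumps(spec, indent=1), encoding="utf-8")
    return old_name
```

You would call it as `set_display_name(folder, "My new name")`, where `folder` is the kernel's folder from the listing. Only the label shown in the Jupyter kernel menu changes; the kernel's folder name, and therefore the name used by `jupyter kernelspec remove`, stays the same.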

Yayy!! Happy coding :)
